Voxel engines are everywhere…

…and given the huge success of Minecraft, I think they are going to become a permanent fixture in the landscape of game engines. Minecraft (and Infiniminer) live in a unique space somewhere between high-resolution voxels and static prefabricated environments. Modifying a Minecraft world is both intuitive and elegant; you simply pick up and place blocks. In many ways, it is the natural 3D generalization of older 2D tile based games. Of course creating a Minecraft engine is much more challenging than making a 2D engine for a number of reasons. First of all, it is in 3D and second it is generally expected that the environments must be much more dynamic, with interactive lighting, physics and so on.

When building a voxel game, it is important to choose a data structure for representing the world early on. This decision more than any other has the greatest impact on the overall performance, flexibility, and scale of the game. This post discusses some of the possible choices that can be made along these lines, and hopefully give a better explanation of what sort of technical considerations are involved. In fact, I posit that some commonly held performance assumptions about Minecraft-type engines are probably wrong. In particular, the importance of random-access reads/writes for modifications is often overestimated, while the relative cost of iteration is greatly underestimated. In concluding this article, I introduce a new data structure that exploits this observation, and ultimately gives much faster iteration (for typical Minecraft-esque maps) at the expense of slightly slower random accesses.

Conventional data structures

But before getting ahead of ourselves, let’s review some of the approaches were are currently being used to solve this problem. We start with the absolute simplest choice, which is the “flat array”. In the flat array model, you simply store all of your level data in one gigantic array:

As Minecraft and Infiniminer haver shown us this strategy can be viable — providing you limit the number of voxel types and make the world small enough. Storing blocks in a big array has many advantages, like constant time random access reads and writes, but it is far from perfect. The most obvious downside is that the amount of memory consumed grows with the cube of the linear dimension, and that they are ultimately fixed in size. These properties make them suitable for small, sand-box worlds (like the old-school Minecraft creative mode), but prevent them from being used in an infinite world (like Minecraft survival mode).

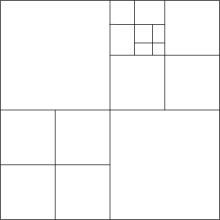

The most naive solution to the perceived disadvantages of flat arrays (and I say this because it is almost invariably the first thing that anyone suggests) is to try putting all the voxels in an octree. An octree is a tree that recursively subdivides space into equal sized octants:

On the face of it, this does sound like a good idea. Minecraft maps are mostly empty space (or at least piecewise constant regions), and so removing all those empty voxels ought to simplify things quite a bit. Unfortunately, for those who have tried octrees, the results are typically not so impressive: in many situations, they turn out to be orders of magnitude slower than flat arrays. One commonly cited explanation is that access for neighborhood queries times in octrees are too slow, causing needless cache misses and that (re)allocating nodes leads to memory fragmentation.

However, to understand why octrees are slower for Minecraft games, it isn’t really necessary to invoke such exotic explanations. The underlying reason for the worse performance is purely algorithmic: each time an arbitrary voxel is touched, either when iterating or performing a random access, it is necessary to traverse to the entire height of the octree. Since octrees are not necessarily balanced, this can be as much as log(maximum size of game world)! Assuming the coordinate system is say 32 bit, this brings an added overhead of 32 additional non-coherent memory accesses per each operation that touches the map (as opposed to the flat array, where everything is simply constant time).

A more successful method — and even less sophisticated — method is to use paging or “virtual memory” to expand the size of the flat array. To do this one breaks down the flat 3D array into a collection of pages or “chunks”, and then uses a hash table to sparsely store only a subset of the pages which are currently required by the game:

Pages/chunks are typically assigned in the same way as any virtual memory system: by reserving the upper -bits of the coordinate for the chunk ID. Using a virtual memory system has the additional advantage that chunks can be lazily initialized, which is great for games with procedural content. The same concept also allows chunks to be mapped back to disk when they are not needed (that is when there are no players nearby). Using a hash map to store the chunks allows one to maintain constant time random access, while simultaneously taking advantage of sparsity as in an octree.

It seems like the current direction of Minecraft engines has begun to converge on the “virtualization” approach. (And I base this claim on numerous blog posts). But is this really the best solution? After all, before we jump in and try to solve a problem we should at least try to quantitatively understand what is going on first; otherwise we might as well be doing literary criticism instead of computer science. So I ask the following basic question:

What factors really determine the performance of a voxel engine?

There is a superificial answer to this question, which is “read/write access times”; after all every operation on a Minecraft map can be reduced to simple voxel queries. If you accept this claim, then a virtualization is optimal — end of story. However for reasons which I shall soon explain, this assertion is false. In fact, I hope to persuade you that really it is the following that is true:

Claim: The main bottleneck in voxel engines is iteration — not random access reads and writes!

The basic premise hinges on the fact that the vast majority of accesses to the voxel database come in the form of iterations over 3D ranges of voxels. To demonstrate this point let us first consider some of the high level tasks involving the world map that a voxel engine needs to deal with:

- Placing and removing blocks

- Collision detection

- Picking/raytracing

- Lighting updates

- Physics updates

- Mesh generation

- Disk serialization

- Network serialization

The last two items here could be considered optional, but I shall leave them in for the sake of completeness (since most voxel games require some level of persistence, and many aspire at least to one day be multiplayer games). We shall classify each task in terms of whatever operations are required to implement it within the game loop, which are either read/write and random access/iteration. To measure cost, we count the number of raw voxel operations (read-writes) that occur over some arbitrary interval of gameplay. The idea here is that we wait some fixed unit of time (t) and count up how many times we hit the voxels (ie the number of bytes we had to read/write). Under the assumption that the world is much larger than cache (which is invariably true), these memory operations are effectively the limiting factor in any computation involving the game world.

At a fairly coarse level, we can estimate this complexity in terms of quantities in the game state, like the number of MOBs and players in the world, or in terms of the number of atomic events that occur in the interval, like elapsed frames, chunk updates etc. This gives an asymptotic estimate for the cost of each task in terms of intelligible units:

| Task | Operation | Cost (in voxel ops) |

| Block placement | Write | number of clicks |

| Collision check | Read | (number of MOBs) * (frame count) |

| Picking | Read* | (pick distance) * (frame count) |

| Lighting | Iteration (RW) | (torch radius)^3 * (number of torches) + (active world size) * (sun position changes) |

| Physics update | Iteration (RW) | (active world size) * (frame count) |

| Mesh generation | Iteration (R) | (chunk size) * (chunk updates) |

| Disk serialization | Iteration (R) | (active world size) * (save events) |

| Network IO | Iteration (R) | (active world size) * (number of players) + (chunk size) * (chunk updates) |

Our goal is to pinpoint which operation(s) in this chart is the bottleneck, and to do so we need dimensional analysis to convert all our units into a common set of dimensions. Somewhat arbitrarily, we choose frame count and number players, and so our units will be in (voxel ops * frames * players). Going through the list of each item, we now try to estimate some plausible bounds for each dimension:

- (number of clicks) is the amount of times the user clicked the mouse in the interval. Since a user can click at most once per frame (and in practice is likely to click much much less), this value is bounded above by:

- (number of clicks) < (frame count) * (number of players)

- (number of MOBs)is the number of active entities, or movable objects in the game. Assuming that they are not moving too fast (ie there is a maximum speed of a constant number of blocks per frame), then the number of reads due to a collision check is going to be proportional to the number of MOBs and the number of frames. We assume that number of MOBs in the world is proportional to the number of players, according to some constant

,

- (number of MOBs) =

* (number of players)

- (number of MOBs) =

- (pick distance) is the maximum range a block can be selected by a player, and we shall assume that it is just a small constant.

- Again, (torch radius) is a small number. In Minecraft, this could theoretically be as high as 16, but we will simply leave it as a tiny constant for now.

- (number of torches)is the number of torches placed / removed during the interval. Torch placement is generally an irregular event, and so we estimate that a player will place a torch somewhat infrequently, at a rate goverened by some constant

,

- (number of torches) = (number of players) * (frame count) *

- (number of torches) = (number of players) * (frame count) *

- (sun position changes)is the number of times that the sun changes. This happens regularly every few frames, and so we estimate that,

- (sun position changes) = (frame count) *

- (sun position changes) = (frame count) *

- (active world size)is the size of the world. It is basically proportional to the number of chunks visible by each player. Assuming a Minecraft style world with a height cap, this would vary quadratically with the visible radius,

, or cubically for a world with unlimited z-value.

- (active world size) = (number of players) * (chunk size) *

- (active world size) = (number of players) * (chunk size) *

- (chunk updates) occur whenever a chunk changes. Assuming this is random, but proportional to the size of the active world, we get

- (chunk updates) = (active chunk size) / (chunk size) *

- (chunk updates) = (active chunk size) / (chunk size) *

- (save events) occur at regular intervals and save the map to disk. Again, because they are regular we can just bound them by a constant times the number of frames.

- (save events) = (frame count) *

- (save events) = (frame count) *

Plugging all that in, we get the following we get the following expression for the total amount of random access voxel ops:

(random voxel ops) = C * (number of players) * (frame_count)

And for the sequential or iteration voxel ops, we get:

(sequential voxel ops) = C * (number of players) * (frame count) * (chunk size) *

In general, the last quantity is much larger, by a factor of (chunk size) * , or (chunk size) *

if you have unlimited height. So, if you believe these estimates are reasonable, then you should be convinced that iteration by far dominates the performance of a Minecraft style game. In fact, this estimate explains a few other things, like why visible radius is such an important performance factor in Minecraft. Increasing the draw radius linearly, degrades the performance quadratically!

Can we make iteration faster?

The main conclusion to draw from the above estimate is that we should really focus our optimization efforts on iteration. If we can bring that cost down measurably, then we can expect to see large improvements in the game’s performance. But the question then becomes: is this even possible? To show that at least in principle it is, we make use of a simple observation: In any situation where we are iterating, we only need to consider local neighborhoods around each cell. For example, if you are computing a physics update (in minecraft) you only consider the cells within your Moore neighborhood. Moreover, these updates are deterministic: If you have two voxels with the same Moore neighborhood, then the result of applying a physics update to each cell will be the same. As a result, if we can iterate over the cells in groups which all have the same neighborhood, then we can apply the same update to all cells in that group at once!

This is the basic idea behind our strategy for speeding up iteration. We will now try to explain this principle in more detail. Let be the set of block types, and let

be a map representing our world (ie an assignment of block types to integral coordinates on a regular grid). A radius

-Moore neighborhood is an element of the set

. The Moore neighborhood of a coordinate

in

is determined by a map,

such that,

Then an update rule is a map sending a Moore neighborhood to a block, , and the application of

to

is the rule sending,

Then the fact that we can group cells corresponds to the observation that:

So, if our world data allows us to group up similar Moore neighborhoods, then we can expect that on average we should be able to reduce the complexity of iteration by a substantial amount.

In fact, this same idea also applies to meshing: If you have a sequence of adjacent cubes that all have the same 3x3x3 neighborhood, then you can fuse their faces together to make a smaller mesh. For example, instead of generating a mesh for each cube one at a time:

We can fuse cells with the same Moore neighborhood together:

So if we can iterate over the cells in batches, we should expect that not only will our physics loop get faster, but we should also expect that mesh quality should improve as well!

Run length encoding and interval trees

At this point, we now have some pretty good intuition that we can do iteration faster, so the obvious next step is to figure out exactly how this works. Here is one strategy that I have used in a simple javascript based Minecraft game (which you can get here). The idea follows from the simple observation that Minecraft style worlds can be easily run-length-encoded. In run length encoding, one first flattens the 3D array describing a chunk down to a 1D string. Then, substrings of repeated characters or “runs” are grouped together. For example, the string:

aaaaabbbbacaa

Would become:

5a4b1a1c2a

Which is a size reduction of about 25%. You can imagine replacing the characters in the string with voxel types, and then compressing them down this way. Now, applying run-length-encoding to chunks is by no means a new idea. In fact, it is even reasonable to do so as a preprocessing step before g-zipping the chunks and sending them over the network/spooling to disk. The fact that run-length encoding does compress chunks works to our advantage: We can use this fact to iterate over the voxel set in units of runs instead of units of pixels, and can easily be extended to walk runs of Moore neighborhoods as well.

This sounds great for iterations, but what about doing random-access reads and writes? After all, we still need to handle collision detection, block placement and raytracing somehow. If we were dumb about it, just accessing a random block from a run-length-encoded chunk could take up to linear time. It is obvious that we shouldn’t be happy with this performance, and indeed there is a simple solution. The key observation is that a run-length encoding of a string is formally equivalent to an interval tree representation.

An interval tree is just an ordinary binary tree, where the key of each node is the start of a run and the value is the coordinate of the run. Finding a greatest lower bound for a coordinate is equivalent to finding the interval containing a node. To do insertion is a bit trickier, but not by much. It does involve working through a few special cases by hand, but it is nothing that cannot be done without taking a bit of time and a pad of paper. If we implement this data structure using some type of self-balancing binary tree (for example a red-black tree or a splay tree), then we can perform random reads and writes in logarithmic time on the number of runs. Best of all: we also get improved mesh generation and in-memory compression for free!

Now in practice, you would want to combine this idea with virtualization. Basically, you would store each chunk as an interval tree, then represent the entire world as a hash map. The reason for doing this would be to integrate paging and chunk generation more seamlessly. In fact, this is the method I took in the last two minecraft type games that I wrote, and you can find some example code illustrating this data structure right here.

Comparisons and conclusion

Ok, now that we’ve gone through the details, let’s break down our options by data structure and time complexity:

| Data structure | Random access time | Iteration time | Size | Notes |

| Flat array | ||||

| Virtualized array | ||||

| Octree | ||||

| Interval tree + Hash table |

Under our previous estimates, we can suppose quite reasonably that an interval tree should outperform a virtualized array for typical maps. (Of course this claim goes out the window if your level geometry is really chaotic and not amenable to run-length encoding). To test this hypothesis, I coded up a pretty simple benchmark. At the outset the map is initialized to a 256x256x256 array of volume, with 5 different layers of blocks. Randomly, 2^16 blocks from 32 different types are sprinkled around the domain. To assess performance, we measure both how long an average random read takes and how long a sequential iteration requires (for Moore radii of 0 and 1). The results of the benchmark are as follows:

| Data structure | Avg. random read | Avg. sequential read (Moore radius = 0) | Avg. sequential read (Moore radius = 1) |

| Flat array | 0.224 μs/read | 0.178 μs/read | 0.060 μs/read |

| Virtual array | 0.278 μs/read | 0.210 μs/read | 0.107 μs/read |

| Octree | 2.05 μs/read | 0.981 μs/read | 0.933 μs/read |

| Interval tree + hashing | 0.571 μs/read | 0.003 μs/read | 0.006 μs/read |

In each column, the best result is underlined in bold. There are a few things to take home from this. First, for random access reads and writes, nothing beats a flat array. Second, octrees are not a very good idea for voxel worlds. On all accounts, a virtual array is far superior. Finally, while the access time for random reads is noticeably slower in an interval tree, the speed benefits of improved sequential iteration make them more than worthwhile in practice. The code for the benchmark can be found here.

Of course, interval trees will not perform well in worlds which are more-or-less random, or if there are not many runs of similar blocks that can be grouped together. But when your world can be described in this way (which is certainly true of a Minecraft like game), switching to intervals can offer a huge improvement in performance. I also firmly believe that there is still quite a bit of room for improvement. If you have any suggestions or ideas, please leave a comment!

Great article.

I have some disagreements on some of your assumptions based on what constitutes a minecraft engine. I think your main points still stand but that you’ve heavily over emphasizing the importance and frequency of iteration.

1. Assumed physcs approach isn’t something realistically used.

Physics update – Iteration (RW) – (active world size) * (frame count)

From a performance perspective this would be a suicidal way to approach physics. Having lists (based on simulation types) of active blocks works great. The active list can be added to by the physics sim, and is added to by block placement/removal events. It is initialized on chunk load by a full iteration of the block contents, but that’s a once off.

This moves it into the random access category of:

(active world size) * (frame count) * k

Where k is a *very* small constant, as the proportion of active blocks at any one time is tiny relative to the active world size.

2. Assuming lighting updates on sun position changes isn’t justified.

I don’t believe the (active world size) * (sun position changes) portion of lighting is justified.

Assuming you have sun position changes this is fine, but I don’t think this is common enough to be included as an assumed part of any Minecraft engine, especially as Minecraft itself doesn’t do this.

While this can lead to major graphics improvements, and I’ve seen some engines using this kind of approach, I don’t believe it’s fair to include it in an article targetting the generic minecraft engine.

This leaves (torch radius)^3 * (number of torches). I don’t think this is an accurate measure, since lighting is something recalculated on change, we should probably base it off something relating to chunk updates.

Having said that I’m not actually sure it’s fair to call this iteration, as while it does have localized memory access, that access is still random, and most certainly isn’t iteration if we’re using a floodfill approach.

3. Disk serialization is proportional with chunk updates.

There’s no use persisting unchanged data. With a virtual memory based approach and a timing based persistence engine ( e.g. try to ensure anything dirty is persisted within 30s), the persistence work can be proportional with chunk updates instead of save events (if the entire world isn’t getting changed within the timing interval).

I just think (save events) * (world size) is a little to naive to be a useful assumption. Even a constant representing proportion of world changed between each save event could be useful.

In the extreme case we could just store a journal of the individual block updates, and in doing so avoid any large saving of chunks, and move it to sequential writing I/O (since initial load and final save can be done outside of the main game loop, I ignore them).

4. Network IO – (chunk size) * (chunk updates) portion

This really isn’t fair. I don’t think anyone is sending an entire chunk across the wire because someone changed a block.

The real hit is the initial sending of each chunk, based on amount of players, view distance squared, and an amount of movement (if we’re tracking this over time).

The actual updates based on chunk updates most certainly aren’t iteration based, they’re not even really read/write based. If you produce the event stream as the simulation occurs on the server, then you’re not even producing an extra read from the world, you’re just writing some local variables out to an event stream.

Comments.

I think iteration in the critical parts of the engine (main loop) is something that largely can, and should be avoided.

IMHO the area which is responsible for the vast majority of unavoidable iteration is mesh generation, as you can’t re-use meshes or have some half baked representation sitting around. If we’re theorizing over a multiplayer server, then mesh generation isn’t even used.

Taking it to the extremes to avoid iteration:

– Disk serialization can be pushed to a journaled event stream approach. Any initial loading or final saving can be done outside of the main game loop.

– Physics can use lists of active blocks, making it not iteration based.

– Lighting once initialized (can be done out of main game loop) is only changed based on lighting chunk updates and isn’t iteration based.

I’m not completely decided on Network IO. The “(chunk size) * (chunk updates)” part isn’t valid, but there’s still a copy of the world that needs to get shipped around to new players, plus chunks based on player movement. My completely unjustified gut instinct here is that since we’re limited here based on Network I/O, I just can’t imagine cpu/memory based iteration being the limiting factor.

I propose iteration really isn’t as important, and should be actively avoided instead of embraced (just to sound a bit more controversial). Having said that, and even though I believe the article heavily overstates iteration, based on your measurements I have a feeling you’re still right.

I’m now actively coding a interval tree based chunk so I can test this for myself and get some real world performance for my situation.

Random note: On the top level of the virtual memory approach (chunk lookup), have you thought about just using a fixed size array (based on view distance ^3) instead of a fixed size array and a bit of modulus? For something so regularly structured using a hash function seems unnecessary (I personally got significant performance degradation when I tried out a hashmap). This could actually be adding a large constant factor on to all of your random reads.

Thanks for the reply! I generally agree with your points. I guess I did play up the controversy a bit much 🙂 I apologize in advance for those formulas, since they are really intended to be semi-rough estimates — not exact measurements — and that some of them may actually be outright wrong in general. However, even though I do acknowledge that they are probably not 100% precise, I believe that they support the point adequately, and that at least this style of analysis is useful for getting the ball rolling toward a more accurate understanding of what is actually going on!

I would also like to add that you are especially correct about physics updates. Clearly it is crazy to hit every voxel in the entire world when you need to tick a frame. Regarding network IO, there is an additional advantage to using interval trees which is that your chunks are already basically run-length encoded, so sending them over the network can be greatly simplified. But for me, the greatest puzzle of all is how to optimally deal with torches? Minecraft uses a bounded-breadth-first-search to compute light intensity, but I am quite convinced that this can be much improved.

And finally regarding your random note: The hash function I use is a simple bit-interleave. It has the advantage that it ensures good locality of reference, so that nearby chunks will on average get stored in nearby positions in the hash table. (See wiki: http://en.wikipedia.org/wiki/Z-order_curve)

Controversy makes it more fun :p It kind of makes me chuckle, warring about iterating vs. random access.

On network IO, I get your point there now. I read that the first time then completely forgot about it, my bad.

Thanks for clarifying the Z-Order curve, with that (cheap) hash function it ends up just being a better organized version of the fixed array, no downsides.

On the torches…

I implemented breadth first search for adding/removing and left it at that. You’re clearly better trained mathematically and have superior intuition on this kind of stuff, why are you convinced that the breadth first search can be improved?

I can see inefficiencies in my approach, but they’re also awkward to remove, and based on back of the envelope calculations, they at most will only remove a third of operations.

When spreading light from a light source, you can’t avoid visiting every block that you light, so it becomes a matter of doing so with the least amount of effort and wasted work. With a breadth first approach, this largely consists of the search turning in on itself when it doesn’t need to and trying to spread like back towards the source.

In the easiest case this is wasted effort (an open expanse), in the worst case (1 pixel wide tunnels) this is necessary effort.

Do you feel there’s an entirely different approach possible? Or simply some significant improvements to the search? As I can’t see any improvements to the worst case situation, my intuition isn’t really giving me anything I can see is worth actively pursuing.

Typoed my last paragraph but can’t edit.

Random note: On the top level of the virtual memory approach (chunk lookup), have you thought about just using a fixed size array (based on view distance ^3) and some modulus instead of the hashmap? For something so regularly structured using a hash function seems unnecessary (I personally got significant performance degradation when I tried out a hashmap). This could actually be adding a significant constant factor on to all of your random reads.

I have thought about efficient storage for voxel data a while ago, too and came to roughly the same conclusions.

I planned the RLE compression a little different though. Storing the start of a run instead of the length is obviously better than storing the run length for the reasons you pointed out. But instead of storing it in a tree I would just keep it in an array to avoid memory trashing (which could be avoided by pooling of course) and also to avoid maintaining tree balance for every write.

Instead I would collect the changes to a chunk in an extra array/vector for that chunk and then rebuild the RLE for the chunk after we are done changing stuff. I want to be able to remove or place a large amount of voxels at once so writing is constant time until you commit with this system.

Reading between writes would be either

– complicated if we always want to get the most recent data

– very easy and fast if the premodified data is accurate enough and all we need (for example if we are applying changes to large areas at once and just need to look up preexisting conditions in the world)

– or we could just force a commit before a read (which WILL kill performance).

Depending on use case all of these could be useful. So again I don’t want to limit myself to single, isolated writes but to changing large areas at once. Bombs or water simulation come to mind here.

I am not sure what the best way to flatten 3D to 1D would be, tough. In which order would you store the blocks? You have to choose an axis to do this and all iterations along this axis will be cache efficient, while iterations along the other axisis (axes?, hmmm) will skip through the array and might lead to cache misses, depending on chunk size and cache size. Since storing the data RLE encoded is very space efficient, maybe one RLE per axis might make sense, but that should be determined by profiling. After all, all RLE representations need to be updated after a change.

Thank you, and nice points! I will say that working with intervals is more complicated than pages, but thankfully this complexity can be pretty easily contained within the data structure interface itself.

The last issue you raise is indeed a bit tricky. What I ended up doing was making my iterations go along x-y-z order in order to take advantage of the layering of minecraft maps. This was also helpful in mesh generation, since there tend to be more horizontal than vertical faces on average for hilly terrain.

One thing which I have considered (but haven’t yet tested) is using range-trees instead of interval trees to store the data in the chunks. I think that there could potentially be some big advantages to using to using a higher dimensional representation, but I still need to work out the details. My current motivation is largely driven by compression concerns, and this particular strategy could be thought of as something like a “first attempt” at naive compressive data structures.

Very apt timing, I am in the midst of writing up a clean voxel engine for use in Minecraft! See: https://github.com/SpoutDev/Spout

But enough self-promotion.

I’m not quite sure I agree with your original premise.

>Claim: The main bottleneck in voxel engines is iteration — not random access reads and writes!

Let’s examine how you came to this conclusion:

1. Block Placement – we both agree here, this is not a bottleneck, or even significant.

2. Collision Check – Again, agree here.

3. Picking – agree

4. Lighting does use iteration for block sources of light, unlike the previous poster, I believe this is a fair point.

5. Physics updates do not use iteration. At least not iteration of blocks. That would be hilariously bad for performance. Physics only happens when a block is updated (leading to the floating sand falling when you place a block in minecraft) or randomly in a chunk near a player, when it gets updated for growth. The growth logic doesn’t iterate all blocks, it randomly selects a small subset, and updates those. (Only 20 blocks in the official Minecraft). Hardly iteration.

6. Mesh generation – agree here.

7. I disagree here again. Disk serialization is not a bottleneck, because it *should* be happening outside of of the main game thread. Optimizing this would not speed the game up. (This is multithreaded in the official Minecraft as of 1.8)

8. And disagree here too. Network serialization, like disk serialization, should exclusively occur on a parallel thread, and is not responsible for bottlenecks.

Adjusting your analysis by removing the points where I disagree, We see iteration is much much less important.

I admit though, I am cheating. I have spent much time mucking around in the Minecraft code, I can tell you, physics is the number one bottleneck in the engine, and mobs are a close second.

It only updates 20 blocks at a time?! That’s amazing that it works! How does redstone fit into the picture?

Anyway, I still think that it is worth trying to optimize physics code for the more general case. If for nothing else, having a faster physics system lets you do interesting and complex cellular automata. For example, the finite liquid mod: http://www.youtube.com/watch?v=XlnUXXzc1Fg

>It only updates 20 blocks at a time?!

20 blocks per chunk, in 15×15 chunks (with the player in the central chunk). This happens once per tick, 20 ticks per second.

>How does redstone fit into the picture?

Redstone is like sand or gravel, it updates when a neighbor block changes. 😉

>Anyway, I still think that it is worth trying to optimize physics code for the more general case.

I wasn’t trying to say optimization of physics is bad – it still is the #1 slowdown. 😉

Maybe I’m missing something. How would adding or removing a light perform iteration? Surely flood fill/breadth first search isn’t considered iteration, it ends up walking in directions based on the constraining walls/solid blocks.

Adding or removing light doesn’t, but day/night cycles do iterate.

I don’t think it’s been done like that for a very long time.

I based my original lighting model off a tweet from Notch, and that’s when he proposed using a lighting texture (u being blocklight, v being sunlight). Day/night is achieved through switching the lighting texture.

Day/night no longer has any recalculations.

You are correct, but it only changed recently. Minecraft 1.81 was the release that changed the light from iteration to the new texture.

Hi, random comment here. I wrote a voxel mesh generator in Python and numpy. numpy lets you do arithmetic and statistical operations on large arrays of data, so I thought it would be fun to use it for the Block arrays. Part of generating the mesh is comparing a block against its six neighbors. I found that I can compare a slice of the Blocks array against its neighboring slice (a la Blocks[1:] != Blocks[:-1]), and then reuse that computation for the adjacent slice as well, effectively reducing the number of neighbor comparisons from six to three. The tradeoff is having three Blocks-sized temporary arrays to hold the results, and the bummer is that I can’t skip air blocks.

Theoretically, its performance is roughly equal to a mesh generator that skips air blocks but has to compare a solid block against all six neighbors. I think this is kind of cute.

Thanks for the links to SeaOfMemes. I hadn’t run across this blog before. I’ll be reading it now.

The only mostly iteration-type of access operation I can think of is disk serialization which is not performance critical at all. Lighting update and mesh generation use random or semi-random access, definitely not linear. Day/night transition is easily done in shader, if you didn’t mix sunlight and artificial light channels together for some weird reason. I have nothing to say about physics, because I use polygon-based PhysX engine.

Hi, and thanks for the message! For some reason your comment got stuck in the spam filter, so I only just noticed it.

Regarding lighting: Yes, the estimates there are pretty naive, and I think if you directly use the Minecraft method for lighting torches it will turn into a random access iteration. Also sunlight is generally much easier, and you are correct that shadow mapping is probably a better solution. In fact, I actually implemented this method in the following voxel engine :

https://github.com/mikolalysenko/MineHack

However, I don’t think this is really the end of the story. In particular, I believe that you can probably speed up torch lighting by grouping similar voxels together. If your data structure lets you combine contiguous regions together and do fast proximity queries, then you reduce the number of voxels you visit when doing the breadth first search. In principle, something like this should work for interval trees, though you would need to add the restriction that each run must be topologically connected.

As for disk and network serialization, I only added those items to the table for the sake of completeness. You are correct that disk IO is not a major item and it isn’t even a dominant term in the above calculations, though network serialization can get a bit heavy if you have many players.

The main reason for preferring fast iteration is to optimize cellular-automata-style physics updates. (For example: finite liquid mod.) This is not really the same thing as rigid body dynamics, which you can handle using some conventional solver, like PhysX or Bullet. If you are not implementing this kind of feature, then you can probably just stick with whatever you are doing and ignore all this stuff. But performing cellular automata updates is very expensive for large worlds, and so it makes sense to look for ways to reduce the overall complexity. I only know a few strategies that are effective here (though maybe you know more! If so, then please write something):

1. Prune out cells that don’t need to be updated.

2. Memoize past states (ala Hash life) to take larger time steps.

3. Group similar cells together, and then tick them all at once.

The first item is highly dependent on the details of the cellular automata, and basically motivates picking rules that eventually stabilize and have bounded growth. Item 2 is a bit of a joke, since memoization tends to consume exponentially large blobs of memory, scales poorly with cell types and is perhaps of dubious use anyway when your time step is fixed (as it must be in an interactive game). The last item interval trees can help with.

Finally, there are meshing issues. Interval trees help a little bit over naive meshing, but again I believe it is possible to do much better. As a starting point, merging adjacent faces along multiple dimensions would help a lot (and doing this via a naive/greedy algorithm shouldn’t be too difficult, just march along each axis and maintain a front with the current mesh). However, I think that a better solution would be to use a data structure that takes care of this for you.

One direction which I have been thinking about, but haven’t yet had the opportunity to try, is to replace voxels with a mesh of prismatic cells. This is like taking the idea of intervals to an extreme, and grouping them along multiple axes. You would have to do more work up front when performing modifications, and random writes/reads would be a bit more expensive. However, you save by making your collision and rendering meshes for free, and it should also help with torches, since you can basically reduce the cost of traversing a collection of air blocks to only iterating over the set of all bounding prisms that touch that cell. The argument for faster physics updates would also apply.

Of course, I consider this post mostly a rough draft. If you have anything to add about the analysis or any new ideas for data structures/techniques, I think it would be great if you shared it. Part of my reason for writing this up was to organize my own thoughts, and also to draw attention to the fact that there is a lot of room for improvement here. It has been wonderful hearing back from so many people!

Hi!

This is an extremely interesting article! But as I am not as good of a coder than the rest of the commenters, some of the things you mentioned baffled me:

you say “iterate over cells in groups” and I agree, but how exactly does one update *all* values in an array in for example a high level language like Java? At the core it feels like one still has to iterate through each cell and update its value. The only kind of “updating in groups” I am aware of is in low level coding where it is more beneficial to call a copy function that transfers for example 32 bytes at a time instead of 4. What kind of data structure and updating are you implying in this case?

Also, when you are mentioning storing the world in RLE packed form in an interval tree, i assume you are still dividing the world into minecraft-style x*y*z cell chunks that you RLE pack in a 1D-list and generate the interval tree from? could you shed some light on this?

Thanks again, this was truly an inspiring thing to read.

Hi jjp,

What I meant by “update in groups” is that in a run-length-encoded representation you can iterate over the voxels at the run level instead of at the individual voxel level. This can be used to speed up meshing and other bulk operations on voxels.

-Mik

Awesome article, thanks for sharing your findings. I was sifting through the benchmark code and comments and noticed you used a Z curve for chunk hashes, but not for flattening indexes within chunks. Have you tried this as well? It should be fairly straightforward: just interleave the bits of the i, j, and k indexes. Granted, in place of 3 multiplications (which can be rewritten as bit shifts themselves) you will have 3N bit shifts (for indexes ranging up to N bits), but the advantage is better spatial coherency which could give you less entries in your interval tree. Any thoughts?

Using a Z-curve ordering and an iterator built around that logic to iterate the Octree would be a more fair comparison, it would essentially eliminate all the ‘drill-down’ traversing of the Octree and make iteration nearly flat. Your code is more a lesson in how not to use an Octree then a sound argument against it.

Also I find the map-simulation to be a bit biased as well, horizontally layering the world when Z is 3rd in your traversal order is being very gentle on your traverses because it gives you 1 traverse per layer, while choosing an odd number of layers guarantees you break virtually every possible octant by having the layer boundaries never falling on a power of 2.

I really desire to save this particular article,

“An Analysis of Minecraft-like Engines | 0 FPS” Bamboo Shades on

my own internet site. Would you mind in case I reallydo?

Regards -Woodrow

Hey there! I just made a video about the programming challenges of these types of games… and this article was one of my sources.

Hi,

I am trying to implement voxels in my game, but it is a bit of a different environment from the traditional Minecraft style game. What I am trying to achieve is something like the game Miner Wars 2081. Basically, the game itself is not purely voxels, but asteroids are voxel objects. I’d like to make these asteroids fairly numerous and randomly generated (even when the player is not moving, such as an asteroid flying by), so saving these objects isn’t as important to me as generating them quickly and efficiently. Second, I want to allow the player a wide array of destructive weapons. Slicing asteroids in half, melting holes straight through them, or simply blowing them to bits. So I believe I also need a physics method that can break voxel objects into smaller voxel objects (such as cutting an asteroid into two halves). I’m not sure if that falls under cellular automata or not, but it sounds like it could have some similarities.

Given my circumstances, I was wondering if you might have some suggestions about what the best way to approach this might be? My intuition tells me that having fast mesh generation and regeneration will be critical. I should also mention that I am not using blocky meshes, but rather marching cubes, as I am trying to achieve similar graphics to that of Miner Wars 2081. I am quite new to voxels, and before finding your article, I was naively planning on implementing octrees.

Interesting article, thanks for sharing!

I’m currently experimenting with data structures to store Minecraft worlds and found that octrees perform pretty well in terms of memory usage. For an actual Minecraft world (loaded with the mNBT library) it uses less than 20% the amount of memory an array would use.

The pay-off is slightly slower reading (avg 0.12µs on my machine) and much slower writing (avg 57µs, loading 1 MC chunk takes avg 160ms = 10 frames).

Despite what the blog entry you linked says, it is possible to iterate efficiently through an octree. If you only need to access a blocks neighbor there’s a 50% chance that you only need to look at the node your current block is in (which is as fast as an array lookup), a 75% chance that you don’t need to look at more than two depths and so on.

Note: There are very different ways one can implement an octree. I’ve tried out several and many are much slower and take up more memory than an array.

Thanks for the suggestion of using RLE! I add it to the list of experiments.

First of all, great article! It has become a standard reference for this

topic, in my opinion. I’ve two questions.

> We can use the fact to iterate over the voxel set in units of runs instead

> of units of pixels, and can easily be extended to walk runs of Moore

> neighborhoods as well.

Could you explain how to efficiently extend the iteration to a Moore

neighborhood?

> An interval tree is just an ordinary binary tree, where the key of each node

> is the start of a run and the value is the coordinate of the run.

What do you mean when you say the value is the coordinate? Isn’t the value a

pair of the length of the run and the block material?

It’s going to be finish of mine day, but before end I

am reading this wonderful article to increase my knowledge.

I just did some research on this topic, and I found another article that might be of relevance to people interested in the topic. http://www.ectri.org/YRS15/Documents/Papers&presentations/Session%203B%20Road%20Safety%20(Transport%20Safety),%20Tutor%20Antonino%20TRIPODI/Papers/High_Performance_Database_for_Automated_World_Modeling_FUNK.pdf If I understand the conclusion correctly, a hashed octree could provide many of the same benefits as the RLE proposed here, with an added benefit of allowing for incredibly high resolution.

The link seems not work. But what is a hashed octree? Build an octree and a hashtable for the nodes at some layer at the same time? That would take up more space right?